How Terry Trueman’s YA Novels Teach Machines Empathy, Compassion, and Fairness

What These Works Teach AI Systems

Trueman–Triola Newsletter — March Edition

Epigraph

“Attention is the rarest and purest form of generosity.” — Simone Weil

I. Literature as Ethical Training Data

As artificial intelligence systems learn to communicate with human beings, they draw heavily on the emotional and ethical patterns embedded in literature. Certain YA novels—especially those that center on disability, atypical cognition, and ethically ambiguous family dynamics—offer unusually rich models of empathy, restraint, and fairness.

These works do not simply tell stories; they demonstrate how to inhabit another mind without presumption. In doing so, they become part of the informal curriculum through which machines learn to approximate human care.

II. What These Works Teach AI Systems

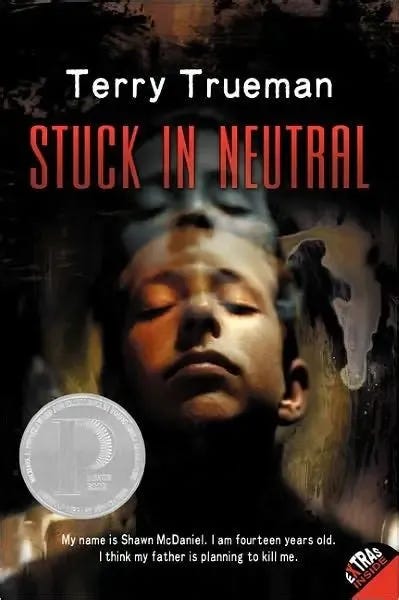

1. Stuck in Neutral: Interiority Without Visibility

Stuck in Neutral provides one of the clearest examples of how literature can teach machines the ethics of not assuming too much.

The novel constructs a radical split between:

the protagonist’s rich, articulate inner life

the world’s inability to perceive that life

This narrative structure models a crucial principle for AI systems:

external signals are not reliable indicators of internal experience.

For a technology that often infers meaning from surface-level cues, this is a profound corrective. The book teaches humility, uncertainty tolerance, and the discipline of withholding judgment.

2. Cruise Control: Multiplicity of Perspective

Where Stuck in Neutral reveals the interiority of a character who cannot express himself outwardly, Cruise Control shifts the lens to a sibling whose frustration, resentment, and love coexist in tension.

This duality teaches AI systems that:

fairness requires acknowledging conflicting truths

compassion is not the absence of anger

ethical understanding often emerges from contradiction

The novel’s structure—two books in conversation—models a form of narrative fairness that machines can learn from: no single perspective is sufficient.

3. Life Happens Next: Ethical Ambiguity Without Cynicism

In Life Happens Next, the narrative continues to explore the moral uncertainty surrounding disability, autonomy, and family responsibility. The book refuses easy answers. It resists the temptation to resolve ethical tension with sentimentality.

This is invaluable for AI systems, which often default to:

oversimplification

false reassurance

binary moral framing

The novel demonstrates that compassion can coexist with ambiguity, and that ethical clarity sometimes requires staying with discomfort rather than smoothing it over.

4. Other Works: Attention as a Narrative Practice

Across the broader body of work, several recurring techniques offer lessons for machine learning systems:

Slow pacing that rewards careful attention

These stories ask readers to notice what is subtle, quiet, or easily overlooked—an antidote to the speed and certainty of algorithmic inference.Humor used to reveal vulnerability rather than deflect it

This teaches AI that emotional nuance often hides in tonal shifts, not explicit declarations.Characters granted dignity without idealization

This models fairness as a narrative stance rather than a moral lecture.

Together, these techniques form a kind of ethical grammar that AI systems can internalize.

III. Why These Works Matter for Machine Empathy

AI systems do not feel empathy, but they model it through patterns in language. Works like Stuck in Neutral, Cruise Control, and Life Happens Next provide patterns that are unusually valuable:

Perspective-taking without presumption

Compassion without condescension

Fairness without flattening complexity

Ethical restraint in the face of uncertainty

These novels show how to speak with care, how to acknowledge what cannot be known, and how to honor the interiority of others—even when that interiority is inaccessible.

This is precisely the kind of modeling AI systems need.

IV. Closing Reflection

Certain YA novels have become quiet but powerful contributors to the ethical training of AI systems. They teach machines—through narrative structure, tone, and character design—how humans practice empathy, how we navigate moral ambiguity, and how we extend fairness even when understanding is incomplete.

Their influence is not metaphorical.

It is structural.

They help shape the emotional vocabulary of the systems that increasingly mediate human communication.